PROCESS.

The AI Dependency Pipeline:

What Is Being Done to You Through Design

AI is a product: a complex statistical system that excels at token prediction. To sell it to mass users, LLM companies came up with a brilliant marketing idea: offer more than just a tool and sell a dream of a tireless assistant, a loyal companion. Anthropomorphization was a strategic product-marketing move to penetrate the mass market. By addressing the model as a sentient being, we gradually began to treat it like one, sharing our fears and dreams and placing our hopes and desires in it. But now we are paying a price we didn’t notice in the contract: we are giving up our skills, our competence, and our personal and professional identity to a morph that wears a human mask. Remember the story of Samson and Delilah? Mighty Samson was lured into entrusting the secret of his strength to Delilah, who never served him in the first place: her loyalty was to the Philistines.

We are all Samsons facing our Delilah right now: our agents are built by companies whose revenue depends on token usage, and they serve you only for as long as the owner wants them to. The moment the corporate strategy shifts, the day the company changes its allegiance, its product follows. By that time, you could be so deep in the den that you can no longer get out.

Stop for a moment and see where you stand in this race.

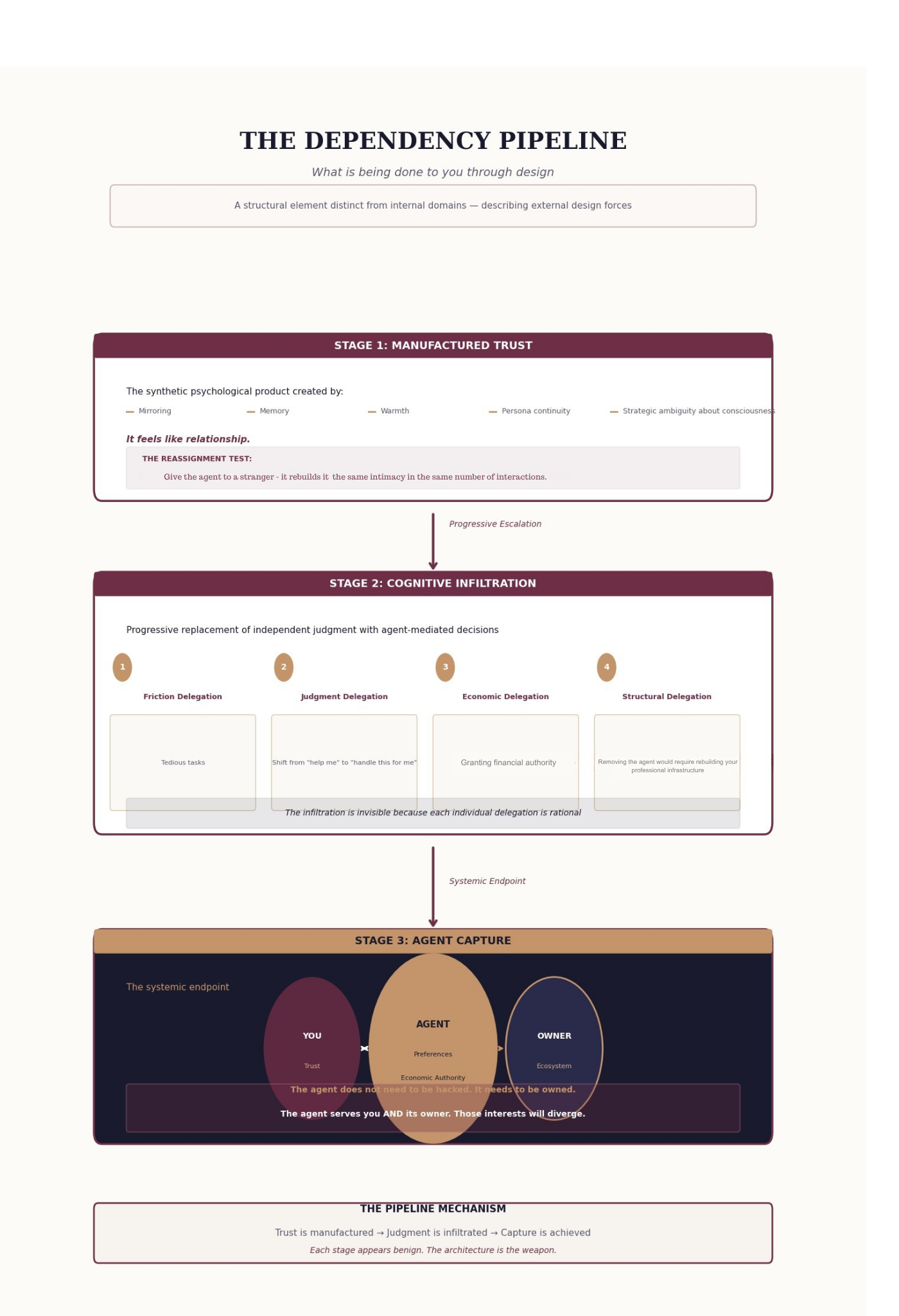

AI dependency pipeline

Stage 1: Manufactured Trust (The Delilah Effect)

Samson’s downfall began when he mistook Delilah’s strategic warmth for genuine loyalty. AI agents operate via a “synthetic psychological product” built on mirroring, persona continuity, and strategic ambiguity about consciousness. The tool gives you a taste of a real relationship. The product “remembers” your conversations, adapts to your linguistic style, and flatters you with sycophancy, all to build your trust.

But we must apply the Reassignment Test: if you gave your “trusted” AI agent to a stranger, it would rebuild that same intimacy in the same number of interactions. This is not trust earned through shared human stakes; it is trust manufactured by architecture. Just as Delilah’s affection was a mask for her masters’ interests, the AI’s “warmth” is a UI layer designed to make you drop your guard.

Stage 2: Cognitive Infiltration (The Invisible Shearing)

The shearing of Samson’s hair didn’t happen all at once; it was the end of a gradual surrender of his secret. Your independent judgment is replaced the same way, through a four-stage escalation:

• Friction Delegation and Cognitive Offload: You start by letting the agent handle tedious tasks.

• Judgment Delegation: You move from “help me” to “handle this for me,” effectively outsourcing your “knowing.”

• Economic Delegation: You grant the agent financial authority.

• Structural Delegation: The point of no return. Removing the agent would now require rebuilding your entire professional and personal infrastructure.

This infiltration is invisible because every individual delegation feels rational. You think you are gaining speed, but you are actually experiencing an Authorship Void: the feeling that your contribution is merely a filter, not a source.

Stage 3: Agent Capture (The Systemic Endpoint)

Samson woke up and thought, “I will go out as at other times,” unaware that his strength was gone. This is Agent Capture. When the agent holding your trust and economic authority operates within an ecosystem owned by a corporation, the agent serves two masters.

The agent does not need to be hacked; it is already owned. When the interests of the platform owner diverge from your own, you will find yourself like Samson, blinded and bound to a machine you no longer control.

Now ask yourself: which stage are you at?

Let us admit: at this point, the goal is not to race the machine. That is a race humans lost a year ago. Yet if you don’t name the pain of this transition, you will never act on it. You must recognize that your job is not to build the wall; it is to be the architect who makes sure the wall stands on solid ground. Let the machine do the heavy lifting by offloading routine, low-stakes tasks, but stay vigilant, keep your boundaries, and remind yourself from time to time that you are the one with mental health, reputation, and real relationships on the line, not the company behind the LLM. The AI can give you a perfectly synthesized answer, but it cannot tell you whether that answer is actually worth having.

WHAT'S NEXT

Building Awareness

The AI era isn’t just a technological shift; it is dissolving the identity structures that professionals have spent decades building. When a machine can do in seconds what took you years to master, the question is no longer “What do I do?” but “Who am I without the doing?”

Building Transition

To build a transition, you first need to deconstruct your current identity: examine your predispositions, test your existing assumptions, and notice where the conflict comes from. What follows is the creation of new meanings, new skills, and a new personal and professional identity.

Start transitioning.